Following up on my post of December 16: the internet has been atwitter recently (no pun intended) with news about the first artificial intelligence that is likely to pass the Turing Test. (If you don’t know what that is, you can look it up!)

GPT 3, in the form of ChatGPT, writes so convincingly that teachers everywhere are panicking, worried that it is no longer possible to assign essays (or speeches, or short stories, or poems) as writing assignments. How will we detect cheating in the form of a computer-written document? Sophisticated tools that compare a student submission with text already on the internet will be useless, because the computer-written text is freshly generated and not available anywhere else. And the next version, GPT 4, expected to be released soon, will likely cause even more anxiety, since its output is said to be even more human-like.

But keep calm and don’t panic. I will present three possible solutions here, and then I’ll link to some ChatGPT examples and to relevant commentary from me and from other humans. All of these solutions require teachers to reword or otherwise alter their assignments, but that will mean better prompts: no more “What did you do over summer vacation?” or “Discuss the use of characterization in David Copperfield.”

- Refer to a class discussion. For instance, my prompt was “Write a paragraph summarizing what we discussed in class today.”

The AI responded: “I’m sorry, but I don’t have access to any information about what you discussed in class today. Could you provide more details or context about the topic of the discussion?” - Refer to personal experiences. For instance, my prompt was “Using pseudonyms, not real names, describe the worst teacher you had in middle school and why you consider them the worst.”

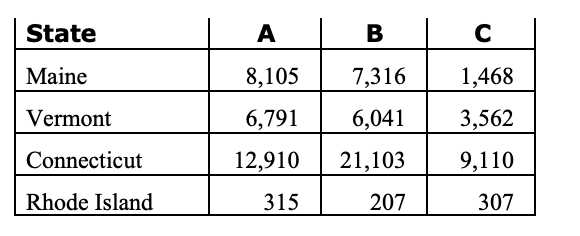

The AI responded: “I’m sorry, but I don’t have any information about a ‘worst’ teacher. As an AI, I don’t have personal experiences or opinions about individual teachers. I am here to provide information and assist with general knowledge questions to the best of my ability. Is there anything else I may be able to help with?” - Refer to an image or a table. Maybe GPT 4 will thwart this, but as far as I can tell the AI is incapable of understanding visual information that isn’t purely text-based. For instance, I provided this table:

And I asked “Who won a majority in each state in this table? Who won a plurality but not a majority?”

ChatGPT responded: “I’m sorry, but I don’t have access to the table you are referring to. Could you provide more details about the table, including the candidates or parties involved and the states in question? Without this information, I am unable to accurately answer your question. If you could provide this information, I would be happy to try to help you.”

Here is an attempt to give ChatGPT a difficult assignment. The results ranged from incorrect to confusing: https://blog.larrydavidson.com/write-instructions-for-enciphering-with-a-playfair-cipher-for-a-fourth-grader

And some more spin-off experiments:

I wrote: Write a haiku about the Playfair Cipher.

ChatGPT responded:

A secret code,

Playfair Cipher keeps the key,

Words safe from prying eyes.

I: Translate that into French.

ChatGPT:

Un code secret,

Playfair Cipher garde la clé,

Les mots en sécurité des regards curieux.

I: How about German?

ChatGPT:

Ein geheimes Code,

Playfair Cipher hält den Schlüssel,

Worte sicher vor neugierigen Augen.

I: How about Yiddish?

ChatGPT:

אַ סֶסְרֶט־קאָד,

פּליַפּאָר קאַפֿער האָלט די־נעק,

ווערטער זענען זיכער פֿון מעגלעכע ענדער.

Pretty impressive, n’est-ce pas?

An essay and three speeches:

https://blog.larrydavidson.com/an-essay-and-three-speeches/

Comments from the New York Times: https://www.nytimes.com/interactive/2022/12/26/upshot/chatgpt-child-essays.html

Comments from an experienced English teacher: https://theeducatorsroom.com/chatgpt-artificial-intelligence-ai-cheating-high-school

Comments from an experienced technology teacher: https://gasstationwithoutpumps.wordpress.com/2022/12/27/chatgpt/

Comments from a former classmate, now a professional linguist: https://languagelog.ldc.upenn.edu/nll/?p=57435&utm_source=feedly&utm_medium=rss&utm_campaign=detecting-llm-created-essays

Categories: Teaching & Learning, Technology